EGAS – Examiner Grading Aiding System

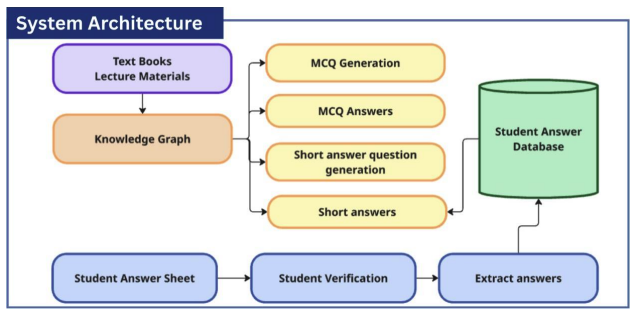

Overall System Architecture

A research-oriented grading assistant that combines graph-based retrieval with LLM reasoning to improve consistency and explainability in academic assessment.

The system addresses challenges in grading both handwritten and digital exam responses, particularly the time required, inconsistent marking, and identity verification problems that arise in large-scale or online examinations. Existing solutions often address isolated tasks such as handwriting recognition or automated grading, which limits their effectiveness.

The proposed system integrates multiple components into a unified framework. It first extracts handwritten responses using CNN- and RNN-based recognition and verifies student identity by comparing the writing against registered handwriting profiles. Once validated, responses are evaluated against structured knowledge representations, chiefly knowledge graphs, so that they can be compared with expected answers.

The system also supports automated MCQ generation and grading using domain-specific knowledge graphs to produce contextually relevant questions. Additionally, short answer questions (SAQs) are generated from key concepts and definitions extracted from structured data sources.

By combining handwriting recognition, identity verification, knowledge graph construction, automated question generation, and LLM-based reasoning, the system provides a scalable, fair, and reliable assessment support tool for modern educational environments, especially in online examination scenarios requiring secure student authentication.

My Contribution

I designed and implemented the Automatic Short Answer Question (SAQ) Generation and Grading module of the system.

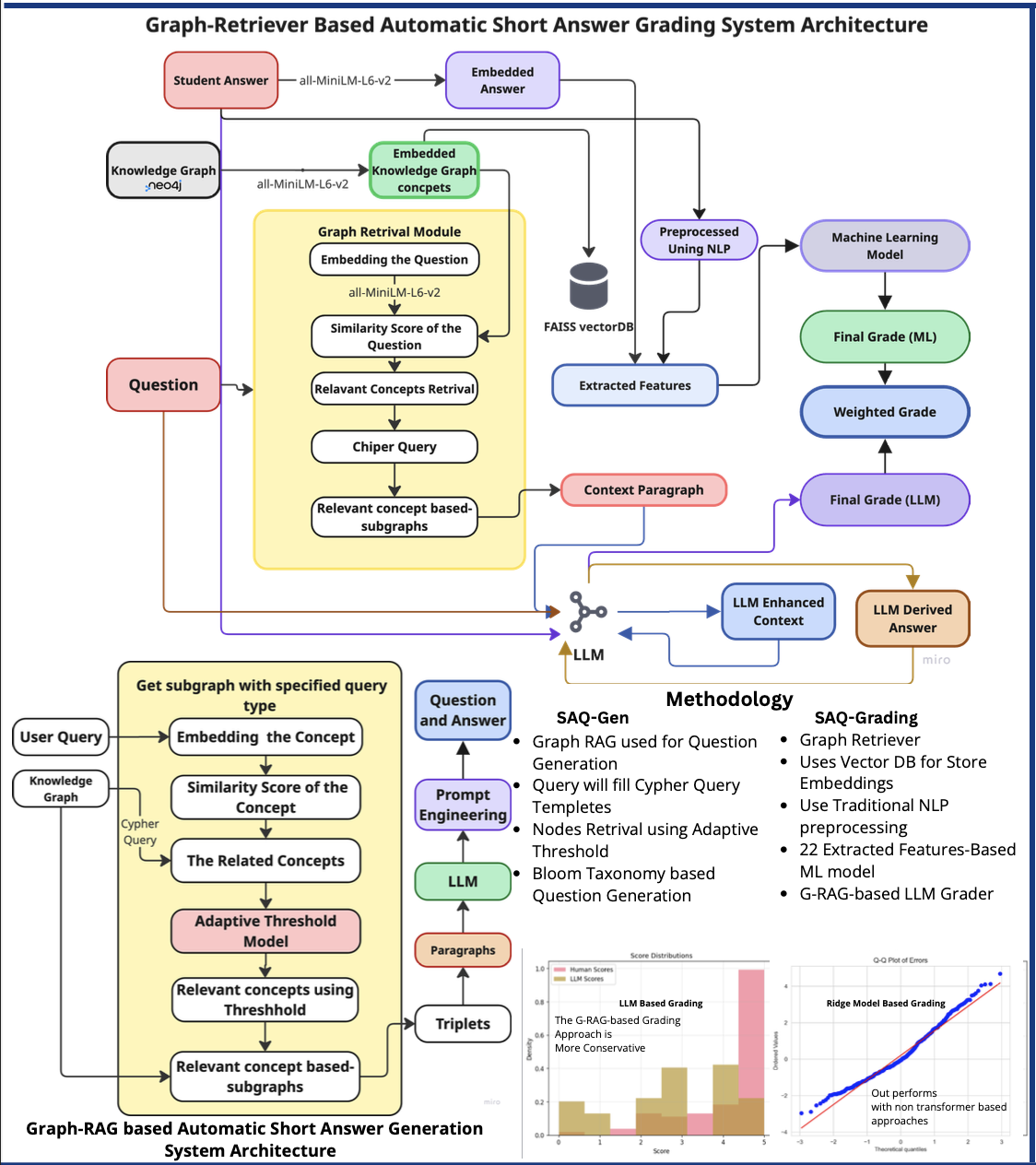

Architecture Diagram of SAQ Generation and Grading

This component focuses on automatically generating short-answer questions from structured knowledge sources and evaluating student responses with context-aware reasoning.

Key Responsibilities

- Designed the SAQ generation pipeline using structured domain knowledge.

- Implemented Graph-Retrieval Augmented Generation (Graph-RAG) to generate context-grounded questions.

- Developed an automated grading mechanism that compares student answers against knowledge graph concepts.

- Integrated LLM-based reasoning to evaluate semantic similarity and correctness.

- Ensured grading outputs are consistent, explainable, and aligned with learning objectives.
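As an illustration of comparing an answer against knowledge graph concepts, the following is a minimal sketch rather than the module's actual implementation. The function name `concept_coverage`, the example concepts, and the use of plain substring matching are all assumptions; a real grader would likely use semantic matching instead of substring checks.

```python
def concept_coverage(answer, expected_concepts):
    """Report which expected concepts appear in a student answer.

    Hypothetical helper: substring matching stands in for the
    semantic comparison a production grader would need.
    """
    text = answer.lower()
    matched = [c for c in expected_concepts if c.lower() in text]
    missing = [c for c in expected_concepts if c.lower() not in text]
    coverage = len(matched) / len(expected_concepts) if expected_concepts else 0.0
    return {"coverage": coverage, "matched": matched, "missing": missing}


# Illustrative concepts, as if taken from a KG subgraph for one question.
expected = ["chloroplast", "glucose", "sunlight", "carbon dioxide"]
answer = "Photosynthesis happens in the chloroplast and turns sunlight into glucose."
report = concept_coverage(answer, expected)
print(report)
```

Returning the matched and missing concepts alongside the score is one simple way to keep the grade explainable to an examiner.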

Technical Overview

Automated grading and question generation systems have become increasingly critical in modern educational assessment to enhance efficiency, accuracy, and scalability. This study presents the Examiner Grading Aiding System (EGAS), an intelligent assessment framework designed to automate key grading and evaluation processes.

The system integrates the following components:

- Advanced Handwriting Recognition and Verification

- Automatic Knowledge Graph (KG) Construction

- Automated Multiple-Choice Question (MCQ) Generation and Grading

- Automated Short Answer Question (SAQ) Generation and Grading

The handwriting recognition module leverages Convolutional Neural Networks (CNNs) and Recurrent Neural Networks (RNNs) to extract handwritten responses, while Siamese Neural Networks (SNNs) verify writer authenticity.
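The verification step of a Siamese setup can be sketched as follows. This assumes both handwriting samples have already been passed through the same shared encoder to produce embedding vectors; the embeddings, the threshold value, and the function names below are hypothetical and only illustrate the distance-based decision.

```python
import math


def euclidean_distance(a, b):
    """Euclidean distance between two equal-length embedding vectors."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def is_same_writer(sample_embedding, profile_embedding, threshold=0.5):
    """Accept the sample when its embedding lies close to the registered profile.

    The threshold is an assumed value; in practice it would be tuned
    on validation pairs of genuine and forged samples.
    """
    return euclidean_distance(sample_embedding, profile_embedding) <= threshold


# Toy embeddings standing in for the output of the shared encoder.
registered = [0.12, 0.80, 0.33]
genuine = [0.10, 0.78, 0.35]
forged = [0.90, 0.10, 0.60]

print(is_same_writer(genuine, registered))
print(is_same_writer(forged, registered))
```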

The Knowledge Graph Construction module employs:

- Large Language Models (LLMs)

- Named Entity Recognition (NER)

- Semantic similarity filtering techniques such as:

  - Word2Vec

  - Pointwise Mutual Information (PMI)

These techniques are used to extract and structure domain-specific knowledge.
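To make the PMI filter concrete, here is a small sketch that scores term pairs by document-level co-occurrence, using PMI(x, y) = log2(p(x, y) / (p(x) p(y))). The toy documents, the function name `pmi_scores`, and the choice to keep only pairs with positive PMI are assumptions for illustration, not the system's actual settings.

```python
import math
from collections import Counter
from itertools import combinations


def pmi_scores(documents):
    """Compute PMI for every term pair that co-occurs in at least one document."""
    n = len(documents)
    term_counts = Counter()
    pair_counts = Counter()
    for doc in documents:
        terms = sorted(set(doc))  # count each term once per document
        term_counts.update(terms)
        pair_counts.update(combinations(terms, 2))
    scores = {}
    for (a, b), count in pair_counts.items():
        p_ab = count / n
        p_a = term_counts[a] / n
        p_b = term_counts[b] / n
        scores[(a, b)] = math.log2(p_ab / (p_a * p_b))
    return scores


# Toy corpus: each inner list is the set of candidate terms found in one passage.
docs = [
    ["neuron", "synapse"],
    ["neuron", "synapse"],
    ["neuron", "exam"],
    ["exam", "paper"],
]
scores = pmi_scores(docs)

# Assumed filtering rule: keep pairs that co-occur more often than chance.
kept = sorted(pair for pair, s in scores.items() if s > 0)
print(kept)
```

Pairs with negative PMI co-occur less often than their individual frequencies would predict, so they are weak candidates for a relation in the graph.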

For automated MCQ generation, a Retrieval-Augmented Generation (RAG) approach is implemented. This combines:

- KG subgraph extraction

- LLM-based reasoning

- BERT-based distractor selection
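The subgraph extraction step can be sketched as a bounded breadth-first walk over triples. This in-memory version is an assumption made for illustration: the stack lists Neo4j, so the real system presumably queries the graph database, and the triples, the hop limit, and the function name `extract_subgraph` are hypothetical.

```python
from collections import deque


def extract_subgraph(triples, seed, max_hops=1):
    """Collect all triples reachable within max_hops of the seed entity."""
    adjacency = {}
    for triple in triples:
        head, _, tail = triple
        adjacency.setdefault(head, []).append(triple)
        adjacency.setdefault(tail, []).append(triple)

    visited = {seed}
    queue = deque([(seed, 0)])
    subgraph = []
    while queue:
        node, depth = queue.popleft()
        if depth == max_hops:
            continue  # do not expand beyond the hop limit
        for triple in adjacency.get(node, []):
            if triple not in subgraph:
                subgraph.append(triple)
            head, _, tail = triple
            neighbour = tail if head == node else head
            if neighbour not in visited:
                visited.add(neighbour)
                queue.append((neighbour, depth + 1))
    return subgraph


# Toy knowledge graph as (head, relation, tail) triples.
kg = [
    ("photosynthesis", "occurs_in", "chloroplast"),
    ("photosynthesis", "produces", "glucose"),
    ("chloroplast", "contains", "chlorophyll"),
    ("mitochondria", "performs", "respiration"),
]

one_hop = extract_subgraph(kg, "photosynthesis", max_hops=1)
two_hop = extract_subgraph(kg, "photosynthesis", max_hops=2)

# Serialise the retrieved facts as plain-text context for an LLM prompt.
context = "\n".join(f"{h} {r} {t}" for h, r, t in two_hop)
print(context)
```

Limiting the hop count keeps the prompt context small and focused on the concept the question targets.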

In addition, the Short Answer Question (SAQ) Generation and Grading module extends the system’s assessment capability.

This component employs a Graph-Retrieval Augmented Generation (Graph-RAG) approach to generate context-grounded questions aligned with Bloom's taxonomy, while grading is performed through a hybrid strategy that combines machine learning with LLM-based evaluation.
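One possible way to combine the two grading signals is a weighted blend with a disagreement check, sketched below. The source does not specify how the scores are combined, so the weighting, the review threshold, and the names `hybrid_grade` and `GradeResult` are assumptions; the two input scores stand in for the outputs of an ML similarity model and an LLM evaluator, both normalised to the range 0 to 1.

```python
from dataclasses import dataclass


@dataclass
class GradeResult:
    score: float        # blended score in [0, 1]
    needs_review: bool  # True when the two graders disagree strongly


def hybrid_grade(ml_score, llm_score, ml_weight=0.5, review_gap=0.3):
    """Blend an ML similarity score with an LLM-assigned score.

    Both the equal weighting and the review gap are assumed defaults.
    """
    for value in (ml_score, llm_score):
        if not 0.0 <= value <= 1.0:
            raise ValueError("scores must be in the range [0, 1]")
    combined = ml_weight * ml_score + (1.0 - ml_weight) * llm_score
    needs_review = abs(ml_score - llm_score) > review_gap
    return GradeResult(score=round(combined, 3), needs_review=needs_review)


agree = hybrid_grade(0.8, 0.6)
disagree = hybrid_grade(0.9, 0.3)
print(agree)
print(disagree)
```

Flagging large disagreements for a human examiner fits the system's role as an assistive tool rather than a replacement.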

The design ensures that:

- Questions remain semantically consistent with the knowledge graph

- Grading outputs are explainable

- The results remain pedagogically meaningful

Ultimately, the system is designed to support examiners as an assistive tool rather than a full replacement.

Stack

- Python

- TensorFlow

- LangChain

- Neo4j

- AWS Bedrock

- DeepSeek LLM API

Key Focus

- Retrieval quality for rubric-grounded grading support

- Explainable output generation

- Workflow reliability for evaluator review loops